Mining companies are in the business of uncertainty: billions of dollars are spent on megaprojects with multi-decade time horizons. To say that mining projects are risky dramatically understates the situation. However, the topic of risk can be fraught with differences of opinion, experience, and emotion. What’s the single most important risk that could sink our project? Which trivial delay can cause a knock-on effect, delaying successful startup by a year? Which scary but immaterial risks are going to pull critical resources away from the things that really matter? What do we even mean when we say “risk”?

At Redteam, we spend a lot of time helping owners of large, complex projects define, understand, and manage risks. We start by recognizing and accepting that there is no industry-standard or consensus definition of the term “risk.” The Project Management Institute defines risk as “an uncertain event or condition that, if it occurs, has a positive or negative effect on one or more project objectives” [1]. Focusing only on negative outcomes, Engineers Canada defines risk as “the possibility of injury, loss, or environmental incident created by a [potential source of harm]” [2]. Going much further into specifics, the Society for Risk Analysis provides seven qualitative definitions and eight quantitative descriptions of risk, encouraging people to use the definition that best matches their situation [3,4]. At Redteam, we take a similar “fit for purpose” view – the most appropriate definition of risk is the one that works best to preserve and protect your specific project objectives. What could prevent you from meeting stakeholder expectations over the life of this mine?

After defining what risk means for a particular mining project, we turn our attention to understanding the nature of the risk within that project. A conceptual understanding of project risk is critical because with a clear understanding of how and why risk occurs, organizations can match the best risk mitigation and management approaches to their specific circumstances.

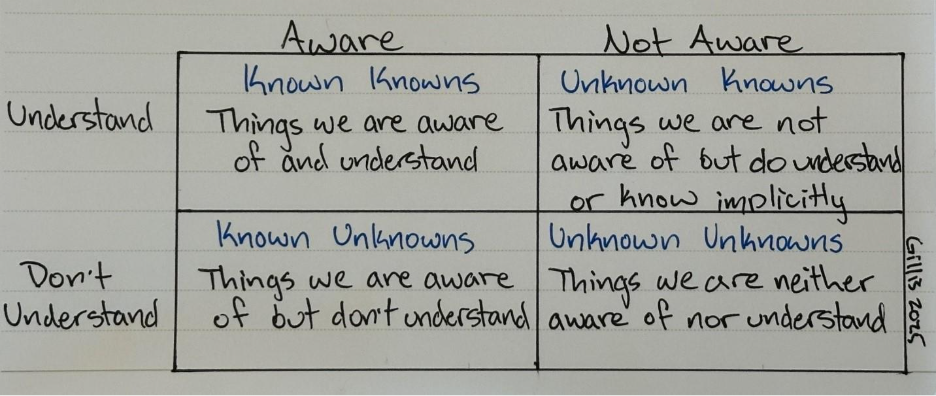

In 2002, US Secretary of Defense Donald Rumsfeld popularized the phrases “known knowns,” “known unknowns,” and “unknown unknowns” when asked about the lack of evidence for weapons of mass destruction in Iraq. Although brought into the modern context by the US defense establishment, the concept likely goes back more than two thousand years, to when Socrates spoke of recognizing his own ignorance [5]. More recently, the “Rumsfeld Matrix” has been established to describe the interaction of awareness and understanding.

The Rumsfeld Matrix [6]

While this may be useful in a social or political context, it doesn’t sufficiently describe different types of risk a mining company can face, nor does it lead to obvious solutions. For example, mining companies are rarely fortunate enough to benefit from implicit, unknown knowledge when it comes to things that really matter for a mining project, like ore grades or 10-year metal price trends.

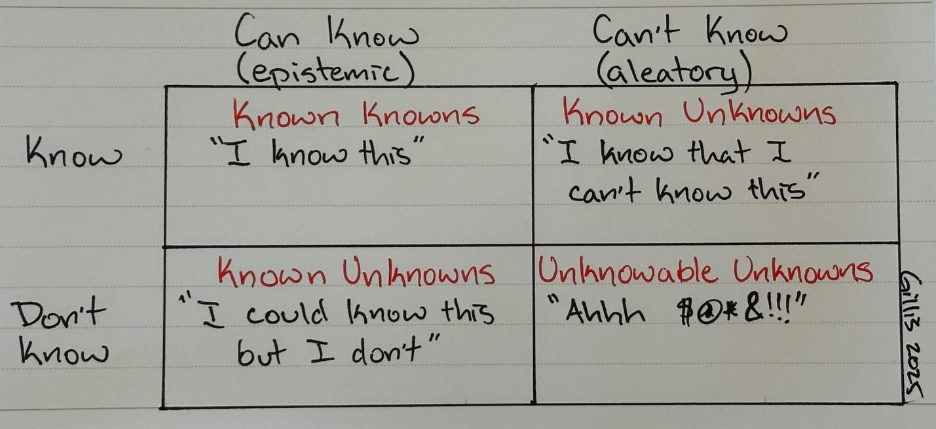

In a mining context, it’s more helpful to categorize uncertainty in terms of epistemic uncertainty and aleatory uncertainty. Epistemic uncertainty refers to a lack of knowledge, whereas aleatory uncertainty refers to a lack of predictability. We’ve summarized this in a 2×2 matrix below – along one dimension you have things that you do or don’t know, and along the other dimension you have things that you can or can’t know.

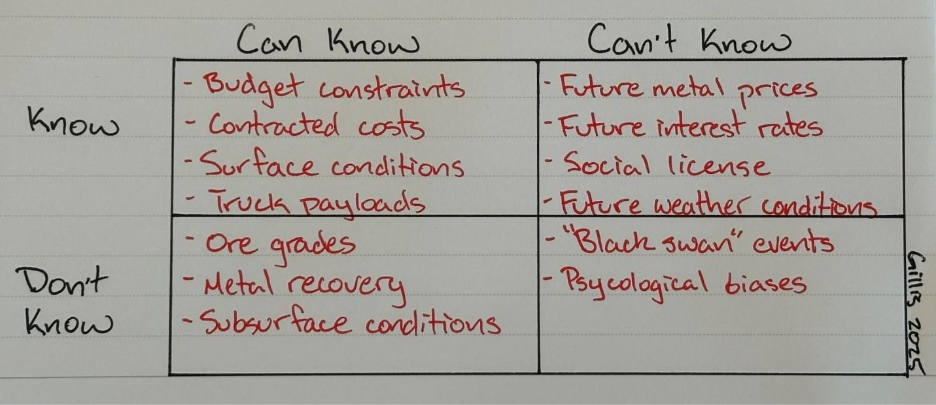

If that seems too abstract, here are some examples considering the planning phase of a mining project:

When you know something, it’s because the information is predictable or unchanging and reasonably attainable. You know how much cash you have on hand; you know the surface elevations and drainage of a prospective mine site; you know (usually!) what you’ll have to pay after signing a fixed-price PO.

When you could know something but don’t, it’s because the information is predictable or unchanging, but it isn’t reasonably attainable. You could know the true grade and recovery of an ore deposit; in fact, you will know this by the end of the mine life. However, it’s prohibitively expensive to get the true information before starting the mine, so we estimate and model. Although presented here as a discrete change, the dimension of what you can know is a continuum. It’s based on how resource-intensive it will be to get the information, and how much time, money, and effort you can afford – think about companies that start mines based on a Preliminary Economic Assessment. Knowing when more information is likely or unlikely to change a decision is a critical element in epistemic risk management and project resource allocation.
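One way to make the question "will more information change the decision?" concrete is an expected value of perfect information calculation. The sketch below is illustrative only: the two grade outcomes, their probabilities, and the payoffs are hypothetical numbers chosen for the example, not data from any project.

```python
# Hypothetical decision: build the mine or walk away, with uncertain ore grade.
# All probabilities and payoffs (in $M) are made-up illustrative values.
scenarios = {
    "high_grade": {"prob": 0.6, "build": 400.0, "walk_away": 0.0},
    "low_grade": {"prob": 0.4, "build": -300.0, "walk_away": 0.0},
}
actions = ("build", "walk_away")


def expected_value(action):
    """Probability-weighted payoff of committing to one action today."""
    return sum(s["prob"] * s[action] for s in scenarios.values())


def value_without_information():
    """Best result from choosing a single action before the grade is known."""
    return max(expected_value(a) for a in actions)


def value_with_perfect_information():
    """Best result if the true grade were known before choosing."""
    return sum(s["prob"] * max(s[a] for a in actions) for s in scenarios.values())


# Upper bound on what any information-gathering program could be worth.
evpi = value_with_perfect_information() - value_without_information()
print(f"Decide now: {value_without_information():.0f} $M")
print(f"Decide with perfect information: {value_with_perfect_information():.0f} $M")
print(f"Upper bound on the value of more information: {evpi:.0f} $M")
```

Because real drilling or test work never delivers perfect information, a program that costs more than this upper bound could not pay for itself under these assumed numbers.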

When you know you can’t know something, it’s because you know the information will change unpredictably in the future. What’s the price of gold going to be on February 29th, 2036? What will the social and political situation be in Greece over the next 5 years? (We’re rooting for you, Eldorado!) What’s the weather going to be next Tuesday? While these can be critical project factors, we understand that we can’t truly know the answer (although there are many psychological biases that often make us think we can).

When you don’t know something and couldn’t have known it, it’s because the event or impact is so far outside the regular course of business that it’s reasonably unforeseeable, or because there’s a psychological bias preventing everyone from seeing it. A reasonably unforeseeable impact can be a combination of the event and the severity. For example, many experts could foresee a global pandemic happening at some point, but the timing and magnitude of COVID-19 were unforeseeable. I suspect container ships get stuck from time to time, but one getting stuck in the Suez Canal and disrupting global supply lines was a big surprise. Natural disasters, acts of violence, financial market collapses – the list could go on and on with no discernible pattern or connection between the events and consequences. In addition, there are a variety of psychological biases that everyone succumbs to. These were studied by Daniel Kahneman and Amos Tversky starting in the 1970s and include the planning fallacy (ignoring representative information and discounting past errors when making predictions); prospect theory (risk aversion vs risk-seeking behaviour); anchoring (being inappropriately influenced by a starting value); and conjunctive risk perception (underestimating many low-probability risks).

How can we manage and mitigate these different types of risk?

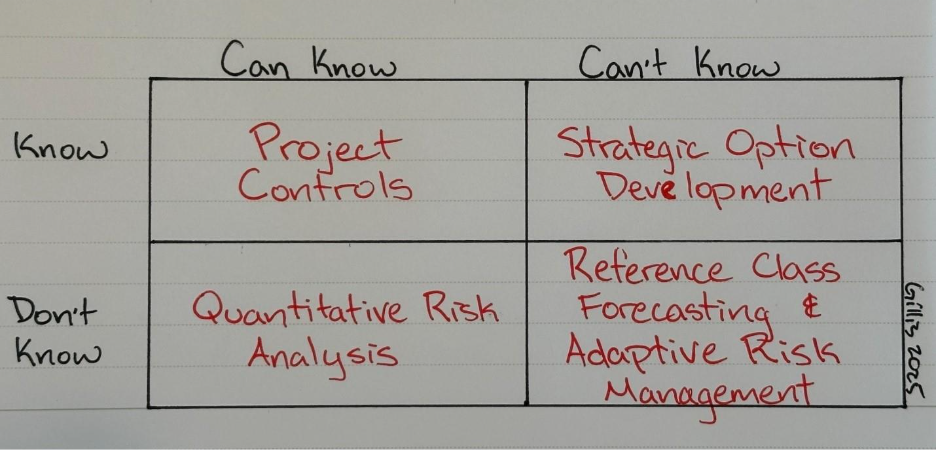

Now that we have a framework for thinking about risk in mining projects, what can we offer as appropriate approaches and methods for dealing with each of these risk categories? Below is a framework identifying the primary risk management strategy that Redteam applies for each category of risk. Like the categories of risk, the approaches to risk management are on a spectrum; we use combinations and complements of these approaches to effectively address the risks being faced.
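As a text companion to the framework, the pairing of each quadrant with its primary strategy can be written as a simple lookup. This is only a sketch: the boolean encoding of the two axes and the function name `primary_strategy` are assumptions made for illustration, and the example items are taken from the planning-phase examples earlier in this article.

```python
from enum import Enum


class Strategy(Enum):
    PROJECT_CONTROLS = "Project Controls"
    QUANTITATIVE_RISK_ANALYSIS = "Quantitative Risk Analysis"
    SCENARIO_PLANNING = "Scenario Planning & Option Development"
    REFERENCE_CLASS_ANALYSIS = "Reference Class Analysis"


# Keys follow the two axes of the 2x2 matrix: (do we know it?, can we know it?).
# Encoding the axes as booleans is an assumption of this sketch.
PRIMARY_STRATEGY = {
    (True, True): Strategy.PROJECT_CONTROLS,
    (False, True): Strategy.QUANTITATIVE_RISK_ANALYSIS,
    (True, False): Strategy.SCENARIO_PLANNING,
    (False, False): Strategy.REFERENCE_CLASS_ANALYSIS,
}


def primary_strategy(know: bool, can_know: bool) -> Strategy:
    """Return the primary (not the only) strategy for a quadrant."""
    return PRIMARY_STRATEGY[(know, can_know)]


# Illustrative register entries, classified by judgment.
register = [
    ("Cash on hand", True, True),
    ("True ore grade and recovery", False, True),
    ("Gold price in 2036", True, False),
    ("Pandemic-scale disruption", False, False),
]
for item, know, can_know in register:
    print(f"{item}: {primary_strategy(know, can_know).value}")
```

A hard lookup like this overstates the boundaries; in practice the categories sit on a spectrum and the approaches are combined.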

For things that we know, the goal is to keep actions and outcomes aligned with expectations. A large and complex project is like any other physical system – entropy will naturally increase over time and things will spread out in every direction unless energy is applied to keep things in order. In a project context, we apply this energy in the form of Project Controls. First, we create performance benchmarks that reflect the approved project plan. Then, we monitor activities and outcomes against these benchmarks, analyzing deviations and understanding the potential consequences. Finally, we implement corrective actions as needed to bring the project back in line with the planned budget and schedule.
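The benchmark, monitor, and correct loop can be sketched in a few lines. The activity names, costs, and the 5% tolerance below are hypothetical values chosen for illustration; a real project controls system would also track schedule and earned value.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    name: str
    planned_cost: float  # benchmark from the approved plan, $M
    actual_cost: float   # cost reported to date, $M


def flag_deviations(activities, tolerance=0.05):
    """Return (name, variance fraction) for activities outside tolerance."""
    flagged = []
    for a in activities:
        variance = (a.actual_cost - a.planned_cost) / a.planned_cost
        if abs(variance) > tolerance:
            flagged.append((a.name, variance))
    return flagged


# Hypothetical status report.
report = [
    Activity("Earthworks", 10.0, 10.2),
    Activity("Mill foundations", 8.0, 9.2),
    Activity("Power line", 5.0, 4.9),
]
for name, variance in flag_deviations(report):
    print(f"{name}: {variance:+.0%} vs plan, corrective action needed")
```

Only the flagged activities go forward for analysis of consequences and corrective action, which keeps attention on the deviations that matter.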

For things we could know but don’t, we go through a structured Risk Identification Process and then model the uncertainty using a Quantitative Risk Analysis. This approach combines budget uncertainty, schedule uncertainty, and discrete risk elements to build a comprehensive project risk model. For example, to understand the risk associated with a major capital project we can take the range of estimated costs and durations for the major activities, along with discrete risks like weather events and permitting delays, to create a risk model that identifies the factors with the highest potential impact on overall project budget and schedule. This not only includes the relative impact of each activity, but also the interaction of risk among all dependent activities. The information needed to construct the risk model can come from an existing distribution of data (e.g., quotes from several vendors, block model confidence intervals), historical data (e.g., schedule overrun for similar activities in the past, pilot plant vs actual recovery for similar ores) or professional judgment. By quantifying this type of project risk, we can understand the value of increasing our knowledge and reducing uncertainty. Examples include additional drilling, running pilot plants, and committing to capital purchases.
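A minimal Monte Carlo sketch shows how cost ranges and discrete risks combine into one distribution. Everything numeric here is hypothetical: the activity names, the (low, most likely, high) ranges, and the risk probabilities and impacts. To stay short it models cost only, treats activities as independent, and uses triangular distributions, so it leaves out the schedule logic and the interaction among dependent activities that a full model would include.

```python
import random

# Hypothetical inputs: (low, most likely, high) cost in $M per activity.
COST_RANGES = {
    "earthworks": (18.0, 22.0, 35.0),
    "mill": (90.0, 100.0, 140.0),
    "tailings": (25.0, 30.0, 50.0),
}
# Hypothetical discrete risks: (probability of occurring, cost impact in $M).
DISCRETE_RISKS = {
    "permitting_delay": (0.30, 12.0),
    "severe_weather": (0.10, 8.0),
}


def simulate_total_cost(rng):
    """One trial: sample every activity cost, then add any risks that fire."""
    total = 0.0
    for low, mode, high in COST_RANGES.values():
        total += rng.triangular(low, high, mode)
    for prob, impact in DISCRETE_RISKS.values():
        if rng.random() < prob:
            total += impact
    return total


def run(n_trials=10_000, seed=42):
    """Return sorted total costs from repeated trials (seeded for repeatability)."""
    rng = random.Random(seed)
    return sorted(simulate_total_cost(rng) for _ in range(n_trials))


def percentile(sorted_values, p):
    """Simple rank-based percentile for p in [0, 100]."""
    index = min(len(sorted_values) - 1, int(p / 100 * len(sorted_values)))
    return sorted_values[index]


totals = run()
print(f"P50 total cost: {percentile(totals, 50):.1f} $M")
print(f"P80 total cost: {percentile(totals, 80):.1f} $M")
```

Rerunning the model with one range narrowed (for example, after additional drilling or a firm vendor quote) shows how much the P80 moves, which is one way to put a number on the value of reducing a specific uncertainty.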

For things we know that we can’t know, it is critical that we accept and respond to the inherent uncertainty. It is too easy and common to pretend the variability doesn’t exist by picking a value (e.g., “the market/expert consensus”) and creating plans based on that unchanging assumption. A more useful approach is Scenario Planning & Option Development, where we understand the likely range of uncertainty over the project time horizon and develop real options that can be exercised or discarded depending on how the future unfolds. To understand the likely range of uncertainty, we can take historical information from similar projects. For example, if you want to know how likely it is that you will experience weather delays for a project in the Canadian Arctic, you can look at the frequency and duration of delays for past projects. If you want to understand the variability of metal prices over the first five years of operation, look at the variability of metal prices in the first five years of operation for mines producing a similar mix of commodities. Next, we identify different strategies that will be successful depending on how the future unfolds and develop strategic options that can be exercised or discarded. For example, imagine a situation where a mining company holds a gold property that has better heap leach economics at the lower bound of gold price variability and better milling economics at the upper bound. This company could create a Strategic Option by placing deposits on long-lead milling equipment while in the permitting phase and then proceed with mill procurement and construction if the gold price is high when permitting is complete or let that option expire and proceed with heap leach construction if the price is low.
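The heap leach versus mill example can be turned into a small worked calculation. The NPVs, probabilities, and deposit below are hypothetical, and the sketch assumes two simplifications: the gold price resolves to one of two scenarios by the end of permitting, and without the deposit the long lead times would force a commitment to one route before the price is known.

```python
# Hypothetical NPVs in $M for each processing route under two price scenarios.
NPV = {
    "low_price": {"heap_leach": 150.0, "mill": 50.0},
    "high_price": {"heap_leach": 250.0, "mill": 500.0},
}
PRICE_PROBABILITY = {"low_price": 0.5, "high_price": 0.5}
MILL_DEPOSIT = 20.0  # hypothetical non-refundable deposit on long-lead equipment


def commit_now(route):
    """Expected NPV of locking in one route before the price is known."""
    return sum(PRICE_PROBABILITY[s] * NPV[s][route] for s in NPV)


def hold_option():
    """Pay the deposit, then pick the better route once the price is known."""
    best_later = sum(PRICE_PROBABILITY[s] * max(NPV[s].values()) for s in NPV)
    return best_later - MILL_DEPOSIT


best_commitment = max(commit_now("heap_leach"), commit_now("mill"))
print(f"Commit to heap leach now: {commit_now('heap_leach'):.0f} $M")
print(f"Commit to mill now: {commit_now('mill'):.0f} $M")
print(f"Hold the option: {hold_option():.0f} $M")
print(f"Net value of the option: {hold_option() - best_commitment:.0f} $M")
```

Under these assumed numbers the option is worth paying for; with a larger deposit or a narrower spread between the two routes, letting it go would be the better choice.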

Finally, for the unknowable unknowns, the best we can do is an overall estimate of project-level risk based on the unusual events and psychological biases that have plagued projects in the past. We do this via Reference Class Analysis, where historical information about costs, benefits, and schedule for similar projects is used to create a distribution of project performance. First, we use professional judgment to identify similar projects from the past (the “reference class”). Next, we compare performance to the original forecast for each project in the reference class and create a probability distribution identifying the likelihood of cost and schedule overrun and benefit shortfall. For example, we can say something like “there is a 50% chance the project will be at least 15% over budget and at least 3 months late based on the performance of similar projects in the past.” While Reference Class Analysis can’t tell you specifically what might go wrong or what to do if something does go wrong, it can quantify the inherent risk in the project and can give you an opportunity to plan for it (e.g., set aside some financial reserves, plan for a winter startup).
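The step from a reference class to a probability statement can be sketched as follows. The overrun figures are hypothetical, not real project data, and the function names are invented for this example; the sketch covers cost only, though schedule overrun and benefit shortfall would be handled the same way.

```python
import math

# Hypothetical cost overruns (actual / forecast - 1) for ten past projects
# judged to be similar. These are illustrative values, not real data.
REFERENCE_CLASS_OVERRUNS = [-0.05, 0.02, 0.08, 0.10, 0.15, 0.18, 0.25, 0.33, 0.45, 0.80]


def chance_of_exceeding(overruns, threshold):
    """Share of reference projects whose overrun was at least `threshold`."""
    return sum(1 for o in overruns if o >= threshold) / len(overruns)


def required_contingency(overruns, confidence):
    """Smallest observed overrun that covers `confidence` of the reference class."""
    ordered = sorted(overruns)
    index = max(0, math.ceil(confidence * len(ordered)) - 1)
    return ordered[index]


share = chance_of_exceeding(REFERENCE_CLASS_OVERRUNS, 0.15)
reserve = required_contingency(REFERENCE_CLASS_OVERRUNS, 0.5)
print(f"Share of similar projects at least 15% over budget: {share:.0%}")
print(f"Contingency covering half of the reference class: {reserve:.0%}")
```

The quality of the answer depends almost entirely on how the reference class is chosen, which is why that step relies on professional judgment rather than on the arithmetic.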

But what if a completely unexpected event occurs? We respond with an Adaptive Risk Management process. First, we identify and characterize the risk caused by the event. What happened, what are the current impacts, and what are the potential knock-on effects? Next, we develop parallel, staged, and iterative response options that can be pursued and evaluated simultaneously, while building a model to assess the value and benefits of pursuing each path as we gather more information. What’s worth trying, and what can we learn from trying each option? Finally, we execute the plan, updating the model to prune the response options as we learn, and iterate until the best response alternative is identified.

As you may have identified in these descriptions and examples, each tool has a supporting role to play in most of the other risk categories. Reference Class Analysis can collect probability data for Quantitative Risk Analysis, Quantitative Risk Analysis plays a key role in Project Controls development, Adaptive Risk Management and Strategic Option Development are similar concepts applied with different time horizons, and so on.

American engineer and inventor Charles Kettering coined the popular phrase, “a problem well-stated is a problem half-solved,” and this certainly applies to problems of risk. Without a clear understanding of the type of risk being addressed, it’s difficult to select the most effective approach. However, when the varied and complex risks being faced by mining companies are clearly understood and characterized, the most appropriate tools for risk management can be brought to bear.

References:

1. Project Management Institute. (2017). A guide to the Project Management Body of Knowledge (PMBOK guide) (6th ed.). Project Management Institute.

2. Engineers Canada. (2020). Public Guideline on Risk Management.

3. Society for Risk Analysis. (2018a). Risk Analysis Fundamental Principles. In Society for Risk Analysis.

4. Society for Risk Analysis. (2018b). SRA Glossary. In Society for Risk Analysis.

5. Rozell, Daniel J. (2020). Dangerous Science: Science Policy and Risk Analysis for Scientists and Engineers. Ubiquity Press. p. 48. ISBN 9781911529897.

6. Saravanan, R. (2021). “Chapter 13: The Rumsfeld Matrix”. The Climate Demon: Past, Present, and Future of Climate Prediction. Cambridge University Press. pp. 193–211. doi:10.1017/9781009039604. ISBN 9781009039604.